By: Srikanth Saripalli, Texas A&M University

In early November, a self-driving shuttle and a delivery truck collided in Las Vegas. The event, in which no one was injured and no property was seriously damaged, attracted media and public attention in part because one of the vehicles was driving itself – and because that shuttle had been operating for less than an hour before the crash.

It’s not the first collision involving a self-driving vehicle. Other crashes have involved Ubers in Arizona, a Tesla in “autopilot” mode in Florida and several others in California. But in nearly every case, it was human error, not the self-driving car, that caused the problem.

In Las Vegas, the self-driving shuttle noticed a truck up ahead was backing up, and stopped and waited for it to get out of the shuttle’s way. But the human truck driver didn’t see the shuttle, and kept backing up. As the truck got closer, the shuttle didn’t move – forward or back – so the truck grazed the shuttle’s front bumper.

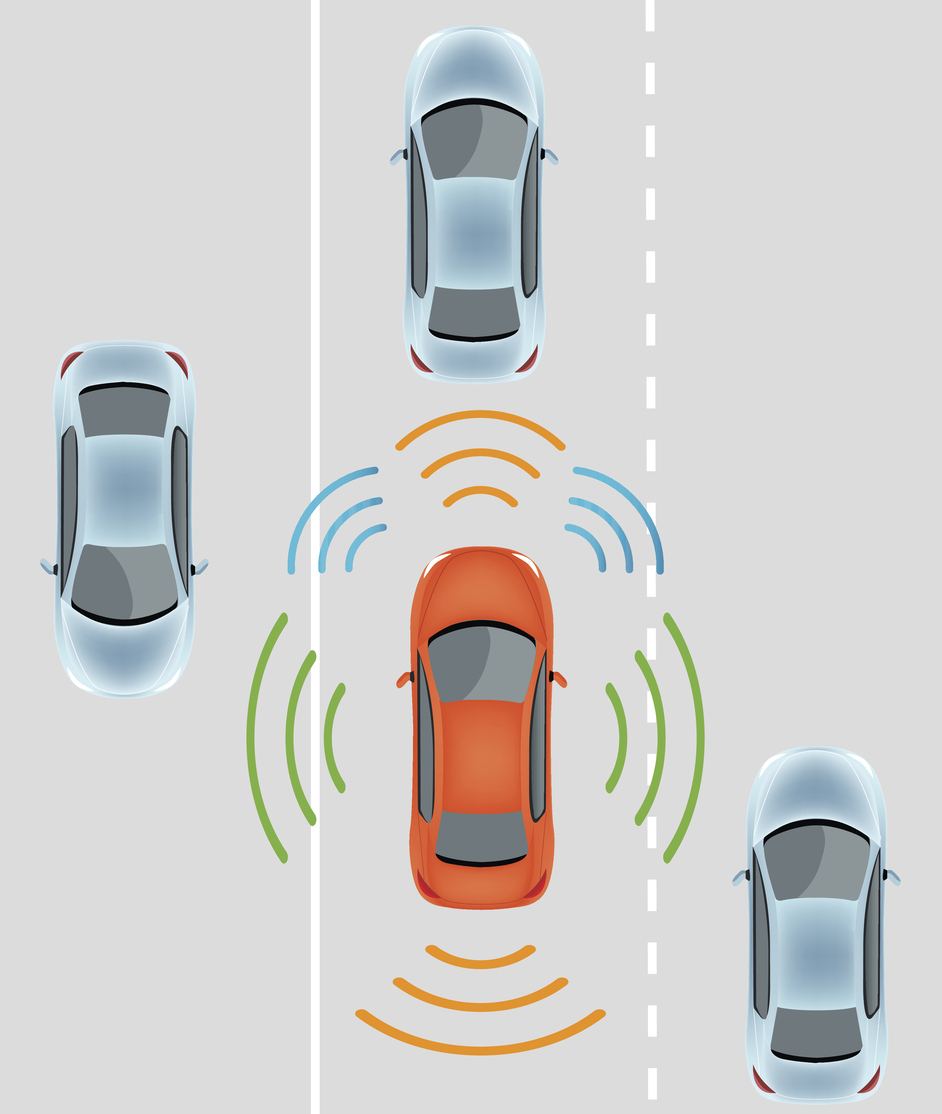

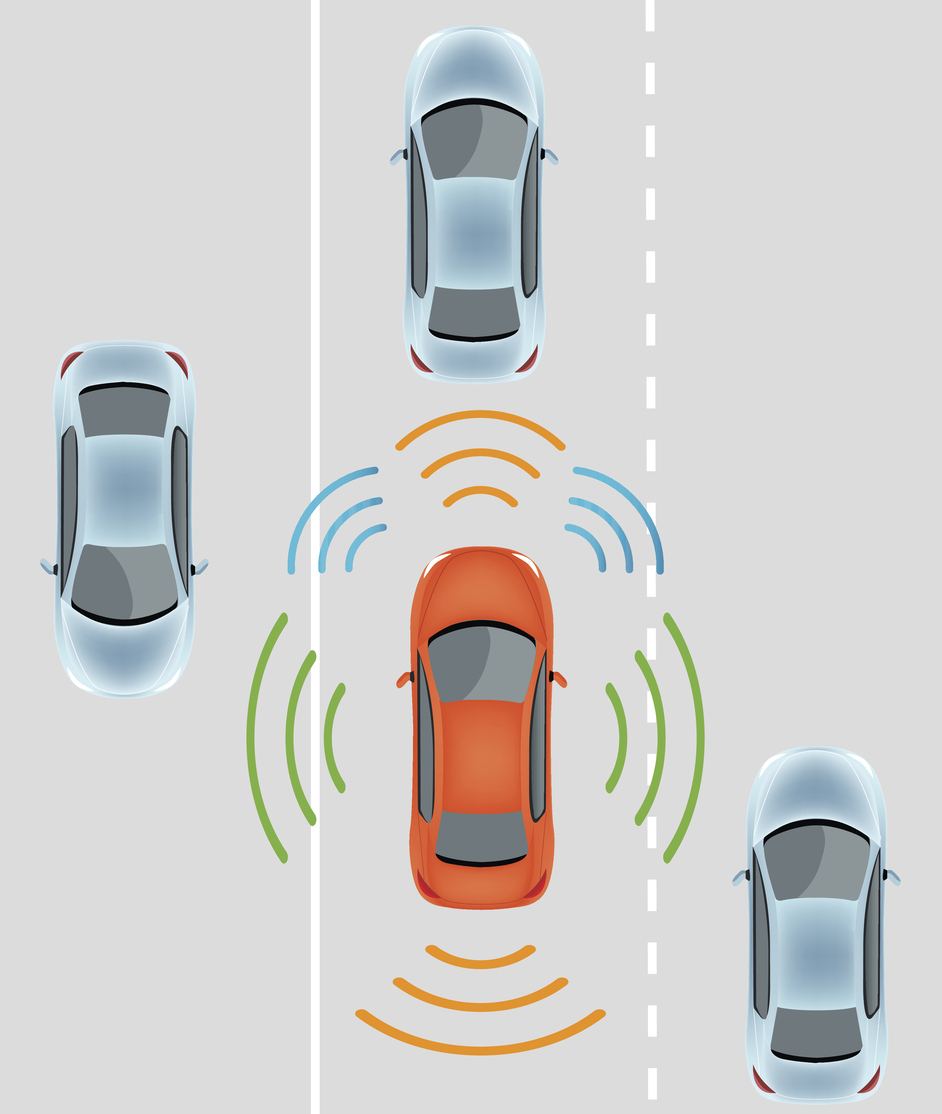

As a researcher working on autonomous systems for the past decade, I find that this event raises a number of questions: Why didn’t the shuttle honk, or back up to avoid the approaching truck? Was stopping and not moving the safest procedure? If self-driving cars are to make the roads safer, the bigger question is: What should these vehicles do to reduce mishaps? In my lab, we are developing self-driving cars and shuttles. We’d like to solve the underlying safety challenge: Even when autonomous vehicles are doing everything they’re supposed to, the drivers of nearby cars and trucks are still flawed, error-prone humans.

Every day about